In this post we will look at how to download and install Kafka on a local system, and how to run multiple Kafka brokers on it.

1.0 Download and Install Kafka

- Download Kafka from – https://kafka.apache.org/downloads

- Extract it to a folder (any folder of your choice)

tar -xvf kafka_2.13-2.7.0.tgz

- Add the Kafka bin directory to the PATH environment variable. e.g.

# $HOME is used instead of ~ because ~ is not expanded inside double quotes

export PATH="$HOME/kafka_2.13-2.7.0/bin:$PATH"

2.0 Starting Zookeeper

Zookeeper is essential to starting and running Kafka.

For our local Kafka setup, we will just start one node of Zookeeper.

- First go to the config folder inside the Kafka installation, and modify zookeeper.properties.

- For now we will just modify the dataDir property, whose default value is /tmp/zookeeper.

- We are moving it out of the /tmp directory to ensure that the Zookeeper data is persisted and re-used when we restart our Zookeeper server.

cd ~/kafka_2.13-2.7.0/config

# Take a backup of existing properties before making any change

cp zookeeper.properties zookeeper.properties.bkp

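# sed -i '' is the BSD/macOS form; with GNU sed (Linux) use plain sed -i

# Double quotes let the shell expand $HOME; a ~ would be written to the file literally

sed -i '' "s:dataDir=/tmp/zookeeper:dataDir=$HOME/kafka_2.13-2.7.0/data/zookeeper:g" zookeeper.properties

Now we are done with all the changes to zookeeper.properties, and we can start our Zookeeper server using the following command.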

zookeeper-server-start.sh ~/kafka_2.13-2.7.0/config/zookeeper.properties

The console output should show Zookeeper starting and running on port 2181.

Keep Zookeeper running for Kafka to work. Don't close the console window where you started Zookeeper.
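To double-check which data directory Zookeeper was configured with, the property can be read back from the file. The following is a minimal sketch; get_property is a hypothetical helper written for this post, not something shipped with Kafka, and the demo runs against a scratch file rather than the real configuration.

```shell
# get_property FILE KEY: print the value of KEY from a Java-style properties file.
# (Hypothetical helper, not part of the Kafka distribution.)
get_property() {
  # Match lines that start with "KEY=" and keep everything after the first "=".
  awk -v key="$2" '
    index($0, key "=") == 1 { value = substr($0, length(key) + 2); found = 1 }
    END { if (found) print value; else exit 1 }
  ' "$1"
}

# Demo on a scratch file, so nothing in the real installation is touched.
demo_file=$(mktemp)
printf '%s\n' '# sample' 'dataDir=/tmp/zookeeper' 'clientPort=2181' > "$demo_file"
get_property "$demo_file" dataDir      # prints /tmp/zookeeper
get_property "$demo_file" clientPort   # prints 2181
rm -f "$demo_file"
```

Against the real file this would be called as get_property ~/kafka_2.13-2.7.0/config/zookeeper.properties dataDir. The function returns a non-zero status when the key is absent, which makes it usable in an if test.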

3.0 Start a single Kafka broker

Just as we modified a configuration for Zookeeper, we will also modify a few properties in Kafka's server.properties:

- log.dirs: We will change log.dirs to a folder other than /tmp/kafka-logs, to ensure that server data is persisted between server restarts.

- num.partitions: By default the number of partitions per topic is set to 1. We will set it to 3.

cd ~/kafka_2.13-2.7.0/config

# Take a backup of existing properties before making any change

cp server.properties server.properties.bkp

# change log.dirs

# double quotes so the shell expands $HOME (a ~ would be written to the file literally)

sed -i '' "s:log.dirs=/tmp/kafka-logs:log.dirs=$HOME/kafka_2.13-2.7.0/data/kafka-logs:g" server.properties

# set num.partitions to 3

sed -i '' 's:num.partitions=1:num.partitions=3:g' server.properties

Now we will start a Kafka server (using the properties from server.properties)

kafka-server-start.sh ~/kafka_2.13-2.7.0/config/server.properties

The console output should show that a broker with id=0 was started.

We will see later how to configure and change the broker id as well.
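The sed -i '' form used for these edits is specific to BSD/macOS sed. As a more portable alternative, here is a minimal sketch of a helper that rewrites a single property through a temporary file. The name set_property is hypothetical (it is not part of Kafka), and the demo works on a scratch file rather than the real server.properties.

```shell
# set_property FILE KEY VALUE: set KEY=VALUE in a properties file, appending the
# key if it is not present. (Hypothetical helper, not part of Kafka.)
set_property() {
  tmp=$(mktemp) || return 1
  awk -v key="$2" -v value="$3" '
    index($0, key "=") == 1 { print key "=" value; found = 1; next }  # replace existing line
    { print }                                                         # keep everything else
    END { if (!found) print key "=" value }                           # append if missing
  ' "$1" > "$tmp" && cat "$tmp" > "$1"
  status=$?
  rm -f "$tmp"
  return "$status"
}

# Demo on a scratch copy rather than the real server.properties.
demo_file=$(mktemp)
printf '%s\n' 'broker.id=0' 'log.dirs=/tmp/kafka-logs' 'num.partitions=1' > "$demo_file"
set_property "$demo_file" log.dirs "$HOME/kafka_2.13-2.7.0/data/kafka-logs"
set_property "$demo_file" num.partitions 3
cat "$demo_file"
rm -f "$demo_file"
```

Commented-out lines such as #log.dirs=... are left alone because only lines that begin with the exact key are replaced. Values containing backslashes would need extra care, since awk interprets escape sequences in -v assignments.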

4.0 Start multiple Kafka brokers

Now let's start another Kafka broker, using the same command

kafka-server-start.sh ~/kafka_2.13-2.7.0/config/server.properties

You will get an error like this: org.apache.kafka.common.KafkaException: Failed to acquire lock on file .lock in /Users/in-sunit.chatterjee/kafka_2.13-2.7.0/data/kafka-logs. A Kafka instance in another process or thread is using this directory.

This is because both brokers read the same log.dirs from server.properties, and the broker with id=0 that is already running holds a lock on that directory.

To resolve this issue, we need to specify a different log directory for new kafka broker.

We can do this by overriding the log.dirs in the command line, using the --override flag

# $HOME is used here because the shell does not expand ~ in the middle of an argument

kafka-server-start.sh \
  ~/kafka_2.13-2.7.0/config/server.properties \
  --override log.dirs="$HOME/kafka_2.13-2.7.0/data/kafka-1-logs"

Now you will get a different error: org.apache.kafka.common.KafkaException: Socket server failed to bind to 0.0.0.0:9092: Address already in use.

This is because there is already a Kafka server running on port 9092, and we need to specify a different port.

In addition to overriding the port, we will also override the broker.id for the new kafka server

kafka-server-start.sh \
  ~/kafka_2.13-2.7.0/config/server.properties \
  --override broker.id=101 \
  --override listeners=PLAINTEXT://:9093 \
  --override port=9093 \
  --override log.dirs="$HOME/kafka_2.13-2.7.0/data/kafka-1-logs"

The above command will start a Kafka broker on port 9093 with broker.id 101.
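To confirm that both brokers have registered, the broker ids can be listed from Zookeeper with zookeeper-shell.sh, which ships in the same bin directory. The sketch below counts the ids in that listing. count_broker_ids is a hypothetical helper, and it assumes the ids appear on a line of the form [0, 101]; adjust the pattern if your output looks different.

```shell
# count_broker_ids: read zookeeper-shell output on stdin and print how many
# broker ids are listed. (Hypothetical helper; assumes the ids appear on a
# line of the form "[0, 101]".)
count_broker_ids() {
  awk '
    /^\[.*\]$/ { line = $0 }              # remember the last bracketed line
    END {
      gsub(/^\[|\]$| /, "", line)         # strip brackets and spaces
      if (line == "") print 0
      else print split(line, ids, ",")    # number of comma-separated ids
    }
  '
}

# Demo with sample input in the assumed format.
echo '[0, 101]' | count_broker_ids   # prints 2

# Against a live cluster (needs Zookeeper on localhost:2181):
# zookeeper-shell.sh localhost:2181 ls /brokers/ids | count_broker_ids
```

The live invocation is left commented out so the snippet can be pasted into a shell even when nothing is running.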

5.0 Using a custom script to start multiple Kafka brokers.

As you can see above, you need to override multiple properties if you want to start multiple Kafka brokers on the same host machine.

To simplify the process, save the following script as kafka-server-start-multiple.sh in your <kafka-server>/bin directory.

#!/bin/bash

# Usage: kafka-server-start-multiple.sh [index]
# With no index, starts the default broker; otherwise the index is used as broker.id and added to the default port.

if [ $# -lt 1 ]; then
  echo 1>&2 "$0: Not enough arguments. Starting kafka broker with default values."
  kafka-server-start.sh ~/kafka_2.13-2.7.0/config/server.properties
else
  DEFAULT_PORT=9092
  PORT=$((DEFAULT_PORT + $1))
  BROKER_ID=$1
  # ~ expands here because this is a plain variable assignment
  LOG_LOCATION=~/kafka_2.13-2.7.0/data/kafka-$BROKER_ID-logs
  echo "Starting Kafka Server with BrokerId=$BROKER_ID on port=$PORT"
  kafka-server-start.sh ~/kafka_2.13-2.7.0/config/server.properties \
    --override broker.id="$BROKER_ID" \
    --override listeners="PLAINTEXT://:$PORT" \
    --override port="$PORT" \
    --override log.dirs="$LOG_LOCATION"
fi

Make this script executable

chmod +x ~/kafka_2.13-2.7.0/bin/kafka-server-start-multiple.sh

- This script will be able to start 1-10 Kafka brokers on your local machine.

- If no arguments are provided, it will start the default Kafka broker with default properties.

kafka-server-start-multiple.sh

- To start multiple Kafka servers, just pass a numbered index.

The script will add the index to the default port and also use it as broker.id. e.g.

# Start server with broker.id=1 on port 9093, and log.dirs=~/kafka_2.13-2.7.0/data/kafka-1-logs

kafka-server-start-multiple.sh 1

# Start server with broker.id=2 on port 9094, and log.dirs=~/kafka_2.13-2.7.0/data/kafka-2-logs

kafka-server-start-multiple.sh 2

# Start server with broker.id=3 on port 9095, and log.dirs=~/kafka_2.13-2.7.0/data/kafka-3-logs

kafka-server-start-multiple.sh 3

and so on...

6.0 Test your Kafka cluster

Create a topic

kafka-topics.sh --bootstrap-server localhost:9092 --topic demo_topic_1 --create --partitions 4 --replication-factor 2

Ensure you have at least as many brokers running as the replication-factor you provided in the above command.

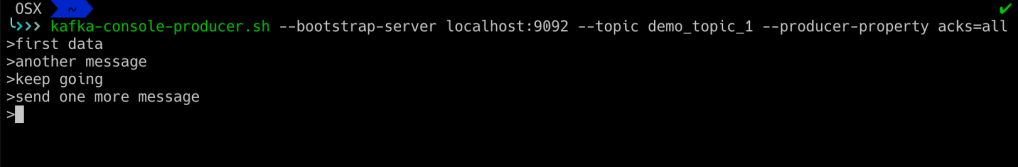

Produce data to a topic (provide the address of any one broker as the bootstrap-server)

kafka-console-producer.sh --bootstrap-server localhost:9092 --topic demo_topic_1 --producer-property acks=all

You will get a prompt where you can send/produce data.

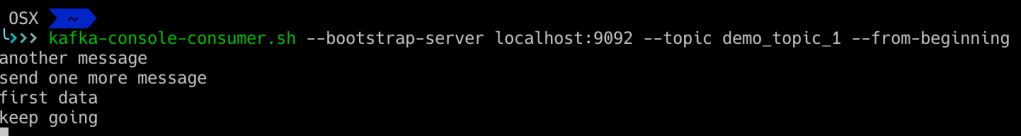
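Since any broker can act as the bootstrap server, the --bootstrap-server option also accepts a comma-separated list, which keeps the client working if the first broker happens to be down. Here is a small sketch that builds such a list, assuming the brokers listen on consecutive ports starting at 9092 as in the setup above. bootstrap_servers is a hypothetical helper name.

```shell
# bootstrap_servers COUNT [BASE_PORT]: print a comma-separated list of
# localhost addresses on consecutive ports. (Hypothetical helper; assumes the
# brokers listen on BASE_PORT, BASE_PORT+1, ... with BASE_PORT defaulting to 9092.)
bootstrap_servers() {
  count=$1
  base_port=${2:-9092}
  list=""
  i=0
  while [ "$i" -lt "$count" ]; do
    # ${list:+$list,} adds a comma only when the list is already non-empty
    list="${list:+$list,}localhost:$((base_port + i))"
    i=$((i + 1))
  done
  printf '%s\n' "$list"
}

bootstrap_servers 3   # prints localhost:9092,localhost:9093,localhost:9094
```

The result can be passed straight to a client, for example kafka-console-producer.sh --bootstrap-server "$(bootstrap_servers 3)" --topic demo_topic_1.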

Consume data from topic

kafka-console-consumer.sh --bootstrap-server localhost:9092 --topic demo_topic_1 --from-beginning

With this we come to the end of our post. We have seen how to start and run multiple Kafka brokers on a local machine.